"Screen Time" Needs a New Tune

Composing a new melody with students and their screens.

The New York Times recently reported on the growing unhappiness among students and parents with education technology:

Los Angeles parents are fed up with schools loading up students with laptops and tablets, and assigning schoolwork on a slew of apps.

Some families, who had decided against giving their children screens at home, told school board members that they were appalled to find young students using school-issued devices — even in kindergarten. Some parents complained that their children were able to play video games or watch social media videos during school.

The edtech industry ignores at its own peril just how disenchanted students have become with their devices, especially after virtual schooling through the pandemic. Before the pandemic, it often felt novel and exciting to unload the laptop cart. Students got a little more dopamine and gave a little more attention simply because of screens.

Several years later, kids feel very differently. You can see the difference even when news outlets report on classroom use of artificial intelligence, surely the buzziest new education technology. Those articles invariably contain rapturous descriptions of personalization, dynamism, and the future. Yet you’ll see, without fail, a field photo of kids looking like they have been dosed with a veterinary-grade tranquilizer.

Edtech needs a new melody.

Teaching is the work of composition. Teachers compose the resources in a classroom—including education technology, but also paper, textbooks, questions, explanations, whiteboards, other students, the physical space, etc.—into experiences that help students learn. I find it helpful to think of those resources as musical instruments and their composition as a melody.

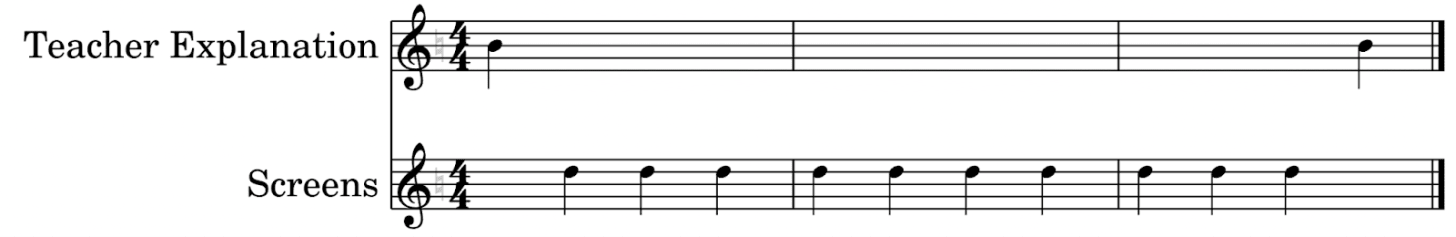

In many classrooms, students find the melody pretty dull, regardless of the teacher’s use of education technology. Few would argue that the following is a pleasing melody, for example: every note is pure teacher explanation for a 20-minute segment of class.

Here is a different melody. Many teachers are enthusiastic about whiteboards and their potential for quick formative assessment. The teacher poses a brief question; students answer on whiteboards; the teacher responds to the class results with an explanation or summary followed by another question.

In that melody, the students hear several different notes—whiteboards, teacher questions, teacher explanations—all played to direct student attention towards learning.

Digital technology frequently produces as monotonous a melody as a teacher speaking endlessly in front of a blackboard. In the sort of experience reported in The New York Times above, you’ll often see the teacher explaining a task, then sending students off to work by themselves on their computers, often in silence, after which the teacher collects the laptops and ends the activity. The experience is digital, but the music is still a dirge. These devices are some of humanity’s most incredible achievements, but when students use them in schools they often hear only two notes—teacher explanation and screens.

We must learn other melodies for education technology. As one example, I taught a class last week here in Oakland, CA, using our curriculum, and I can tell you that kids were on devices for no more than five minutes at a time before I brought them together to a) set up the next task, b) ask selected students to share their ideas, c) ask the class to help settle a dispute, d) do a closing assessment on paper, etc. The composition looked a lot like this.

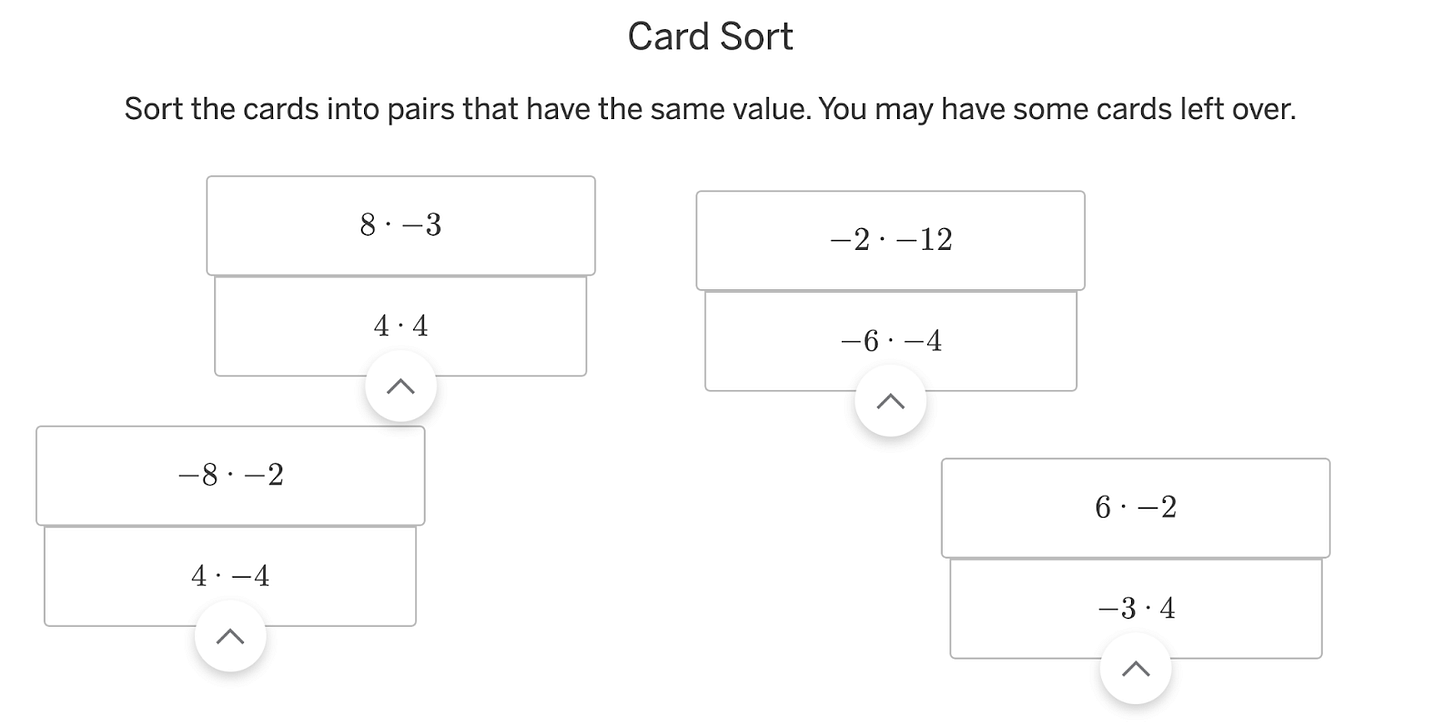

In one of my favorite moments, students heard multiple notes simultaneously—a chord. Students were matching different sets of cards together to make equal products.

There were hundreds of digital cards around the classroom. With the push of a button, the software analyzed which card was the hardest for the class to group. I told the class, “This card is our hardest. Show your neighbor where you have placed it. See if you agree.” Students passed their laptops across the table to their classmate as an object of conversation just as you or I might a coffee table book.

This is a challenging way to teach, in part because computers incur heavy switching costs. It takes quite a lot longer to log into a laptop than it does to “log into” a paper handout or a class conversation. As we segued from computers to a class conversation, I begged the kids to close their laptops only halfway, because if they closed them all the way, the district laptops automatically logged them out and we’d lose significant time logging back in. Those costs all mean education technology needs to bring more value to a learning experience than any analog substitute. In my case, I used a digital card sort because it could identify the most challenging card with a speed and accuracy that I can’t match on my best day with paper cards.
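For readers curious what "finding the hardest card" might involve, here is a minimal sketch in Python. It is an illustration under my own assumptions, not a description of how any particular product works: it assumes each student's placements are available as a mapping from card to group, that there is an answer key, and that "hardest" means the card with the highest share of wrong placements. The names `hardest_card`, `placements`, and `answer_key`, along with the sample data, are all hypothetical.

```python
from collections import Counter


def hardest_card(placements, answer_key):
    """Return (card, error_rate) for the card the class misplaced most often.

    placements: {student: {card: chosen_group}}  (hypothetical data shape)
    answer_key: {card: correct_group}            (hypothetical data shape)
    """
    attempts = Counter()
    misses = Counter()
    for student_cards in placements.values():
        for card, group in student_cards.items():
            attempts[card] += 1
            if answer_key[card] != group:
                misses[card] += 1
    # Share of students who placed each card in the wrong group.
    error_rates = {card: misses[card] / attempts[card] for card in attempts}
    card = max(error_rates, key=error_rates.get)
    return card, error_rates[card]


# Made-up class data: students sort multiplication cards by product.
answer_key = {"3x8": 24, "4x6": 24, "2x9": 18, "6x3": 18}
placements = {
    "student_a": {"3x8": 24, "4x6": 24, "2x9": 18, "6x3": 18},
    "student_b": {"3x8": 24, "4x6": 18, "2x9": 18, "6x3": 18},
    "student_c": {"3x8": 24, "4x6": 18, "2x9": 18, "6x3": 24},
}

card, rate = hardest_card(placements, answer_key)
print(card, round(rate, 2))
```

With paper cards, a teacher would need to walk the room and tally by eye. A tally like this one runs over every student's work at once, which is the kind of value that can justify the switching costs.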

“How much screen time is too much?” is an important question, but just one of many. We should also wonder what students do with their time on screens and what purpose that work serves. With so much edtech today, the instruments are shiny and new but the melody is still monotonous. We should wonder how we can play a more interesting melody with computers in class, kids switching in and out of them as easily as switching between people in a conversation, between pages in a book, between instruments in a symphony called learning.

Love the point you are making and the metaphor, but I don’t see the actual activity working easily. Too much shifting and opportunity for lost learning in that shift. I think we could easily create all the cards, print them out, and have the kids work in groups to answer the question; then we could ask them what they believe AI predicted, and why (with a quick writing of two or three sentences). After that, we could “reveal” what AI selected (a la “Survey Says…!!!”). Students could analyze that one and compare it to what they had predicted and to each other’s predictions. I think that would be more manageable; what do you think?

Wonderful metaphor. You mentioned AI for a hot second. PBS NewsHour reported last night about the plight of higher education (cost outweighs perceived benefits of better employment). At the end of the segment, I think they said that employers wanted students with AI capabilities. It’s a conundrum. My husband is 60 and works for a financial institution. Over the course of about 35 years of work, he has learned through experience how things work. He now uses AI at work to replace the work that people used to do…like lower-level jobs, where a person would learn the business by doing the entry-level work. My husband can use AI effectively because he has 35 years in the industry. How are recent college grads with no real-world experience supposed to know what to do with the AI? How are they going to know if what they are doing with AI is even valid? There’s a void.