Why OpenAI’s New Math & Science Simulations Don't Work

The learning is frictionless, which is the opposite of what learners need.

OpenAI has done something that is, once again, very neat, but not useful for novice learners. Here is how the company describes it:

Research suggests that visual, interaction based learning can lead to stronger conceptual understanding than traditional instruction for many students. When learners can manipulate variables and instantly see the effects, they may be better able to internalize the relationships behind mathematical and scientific concepts.

Type particular questions about math and science into ChatGPT and you’ll see its usual chat response, but also an interactive visual display where students can drag sliders back and forth and watch a diagram change. The experience feels frictionless, which may sound great if you work in software, but it is a liability for novices, not an asset.

The OpenAI website contains examples from math and physics. Drag the sliders around yourself. The experience is silky smooth. You can make diagrams morph and dance almost without thinking about them, which is exactly the problem.

Novices need more friction, not less. Learning results from friction. It is a grinding of gears. You learn when you make your old knowledge and new knowledge lock together, reconciling what you know with what you knew. And at every point in that process it is easy to tell yourself, “Yes, I have done it. I have internalized the relationships behind mathematical and scientific concepts,” even if you haven’t.

When a novice uses OpenAI’s Pythagorean Theorem simulation, for example, they are much less likely to say, “Ah, I now understand that the sum of the squares of the legs of the right triangle is equal to the square of its hypotenuse” and much more likely to say, “Ah, when I move my hand right and left the square gets bigger and smaller.” Without friction, the superficial insight replaces something substantial. The empty carb replaces the whole grain.

Education research offers us some insight here. Lots of studies demonstrate that immediate feedback is more helpful than delayed feedback, particularly when we’re talking about giving the same test results immediately or several days later. But OpenAI’s diagrams are different. They have more in common with the early LOGO microworlds, where you’d make a turtle move around at your mathematical command. Simmons and Cope (1993) found that, in those worlds, students were more likely to use procedural strategies like trial-and-error when they received immediate feedback than when they received delayed feedback. Kids needed friction to cross the threshold from superficial to deep understanding.

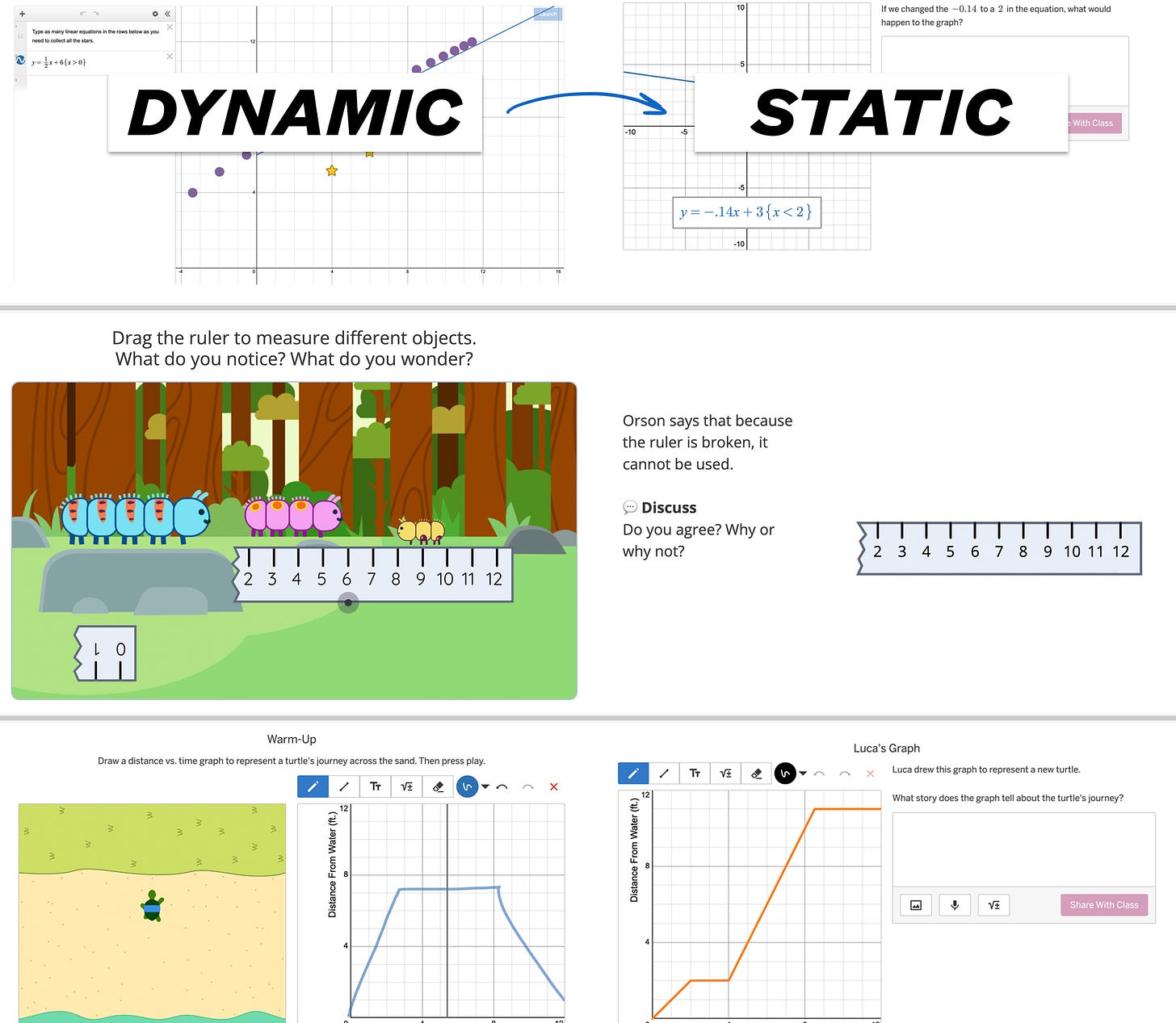

That finding is why, in our curriculum, we offer students a dynamic interactive and then follow it with something quite static: a question that asks students to slow down and go beyond their easy conclusions about the interactive, a question that introduces more friction, not less. When answering those questions, novices benefit enormously from an agent telling them, “This is good, but let’s think harder about what’s going on.” And, in 2026, there is no agent more effective at coaxing students to endure and engage in that friction than a human teacher. AI can assist those teachers in that work, certainly, but it is still humans who help humans do hard things.

With these interactive diagrams, OpenAI has yet again offered the world a great gift, though perhaps not the one they intended: an opportunity to better understand the challenges of learning, the sophistication of teaching, and the value of human relationship. These interactive diagrams are something that AI can easily do, but they are not, on their own, something that students actually need.

Featured Comments

Timothy Burke on AI saving teachers time:

But also because there isn’t any evidence of the repurposing of the time saved. That is the yawning void in the discourse of AI boosters: exactly what are they imagining that the saved time will be redirected towards that is a more valuable activity for trained professionals? The reason they don’t want to concretize it is because even if AI automates certain tasks effectively (a big if) AI boosters don’t actually know enough about any workflow or work processes in any existing profession to envision what professionals would rather be doing nor do they understand anything about what the obstacles to doing that work actually are.

So much of the tech and business community believes teaching time is fungible like money. Save money on your car insurance and spend it literally anywhere else. Save time grading papers after school and ... you can’t actually spend that time in school. If AI saves teacher time, well that’s great on its own, but too many people assume that time can transfer from outside to inside the classroom.

Upcoming Presentation

San Diego County friends: on Friday, March 27th I will be at the San Diego Educator Symposium and Publisher Fair hosted by Amplify. Space is limited so register by Friday, March 20, if you’d like to attend.

Odds & Ends

¶ Wess Trabelsi, a lament about education today:

[Generative AI] is crashing into our classrooms at the exact moment when traditional assessment proxies are broken, and more importantly, when students’ hopes about the future are incredibly brittle. We are asking students to willingly engage with the “desirable difficulties” of learning, in the hope that they develop the skills required to direct and audit AI output. Except, we can’t promise them a stable job or a predictable future in return, and they know it.

¶ Freddie deBoer, along similar lines as Trabelsi:

All of this education [in crisis] discourse (all of it, all of it, all of it) is downstream of the reality of the neoliberal turn, globalization, and deindustrialization. We decided that we didn’t want jobs that don’t require a college degree anymore, many people are not academically equipped to get a college degree, and so we manufactured this “crisis.”

We need to name clearly what teachers can and can’t do, and then to wonder why different groups of people tell teachers, “You can fix poverty.” Freddie deBoer makes a compelling argument that a) this isn’t something education has ever done anywhere on a national level, and b) there are plenty of other levers (redistributive economic policy and social welfare, for example) that have.

¶ Get in the car, folks. It’s time for a rebrand!

Precision learning is fundamentally different [from personalized learning]. It would enable educators to use technology, data and evidence to identify exactly where a student is struggling, which interventions are most likely to work and how to deliver them effectively and equitably.

Call the program whatever you want. My questions will always be the same: a) what math does it let kids do? b) how does it make use of human relationship? With personalized learning, those answers have been a) math that reduces to a number or multiple choice response, b) before and after the program but not during.

¶ Kip Glazer is a helpful voice—the principal of a large school in the tech center of the world; forward-thinking with AI; realistic about the work of teaching, learning, and leading. She has been putting edtech companies on notice this whole month:

The best tool in the world fails when the workflow around it is broken. When it does not talk to your SIS. When it adds three steps to a process that already had too many. When it was designed without accounting for the fact that a teacher’s day is not a controlled environment. It is a living, unpredictable, deeply human one. [...] Human variability is not an edge case. It is the entire job.

¶ 🎉 S/o to my colleague Ana Torres for winning Best Female Hosted Podcast from the American Writing Awards. Beyond My Years is a great time.

Has any student ever gained real insight from the Pythagorean theorem diagram with the squares along each side of the triangle? I’m baffled by this every time I see it: the union of two squares is not a square! Is it supposed to be obvious to everyone at a glance that their areas add up in the right way?

Appreciate this as always. Just wanted to respond to this part of the deBoer quote:

"Many people are not academically equipped to get a college degree."

Speaking from my experience teaching at a community college:

- The number of people who have the academic and intellectual capability to succeed in college is much bigger than some people seem to think it is. Student ability is not the functional limitation!

- Given the choice, many students will choose to work on subjects that they find personally meaningful, whether or not they are directly connected with career preparation. Student interest is not the functional limitation!

- The struggles that students experience in college typically have much more to do with challenging life circumstances than academic capability.

- The nature of students' challenging life circumstances, the impact they have on students' learning, and many of the difficulties involved in providing effective support are all downstream of systemic inequities and political decisions about resource allocation.

- Even within those systemic problems, there are things we can do to make college work better for more students without compromising what college should be, and most of those things begin with building human connections.