I Don’t Believe This Finding That AI Is Saving Teachers Six Weeks per Year

It’s hard to figure out what’s going on right now, but this is not what’s going on right now.

EdTechnical is currently announcing the winners of their forecasting competition, where entrants made predictions about five different questions in education. I was a judge for the “Teaching Profession” track, which asked:

By the end of 2028, what percentage of non-interpersonal teacher activities (lesson planning, grading, and parent communication) will teachers routinely delegate to AI systems?

My own prediction here would have landed somewhere quite a bit south of 50%, mostly because I think the interpersonal and non-interpersonal tasks of teaching are pretty tough to disentangle. Wess Trabelsi won with a prediction of 65%. (Congrats, Wess. I liked your entry and described it to the other judges as “a wild ride.”)

Nearly every entrant, including Wess, relied on a Walton Family Foundation and Gallup survey that asked teachers about their AI use and the time it saved them. The big finding of the report:

Teachers who use AI weekly save 5.9 hours per week — the equivalent of six weeks per school year. Currently, about three in 10 teachers are using AI at least weekly, with more frequent users experiencing greater time savings.

I want to offer a few reasons why I suspect that finding is wrong. In fact, if it is anywhere close to right, if this technology (which I regard as “neat”) can effectively shave six weeks off teaching work (which I regard as some of the most taxing work that exists), then I have drastically misunderstood AI or teaching or both.

Here is why I’m skeptical of the finding.

People aren’t great at self-reporting the time they save with AI.

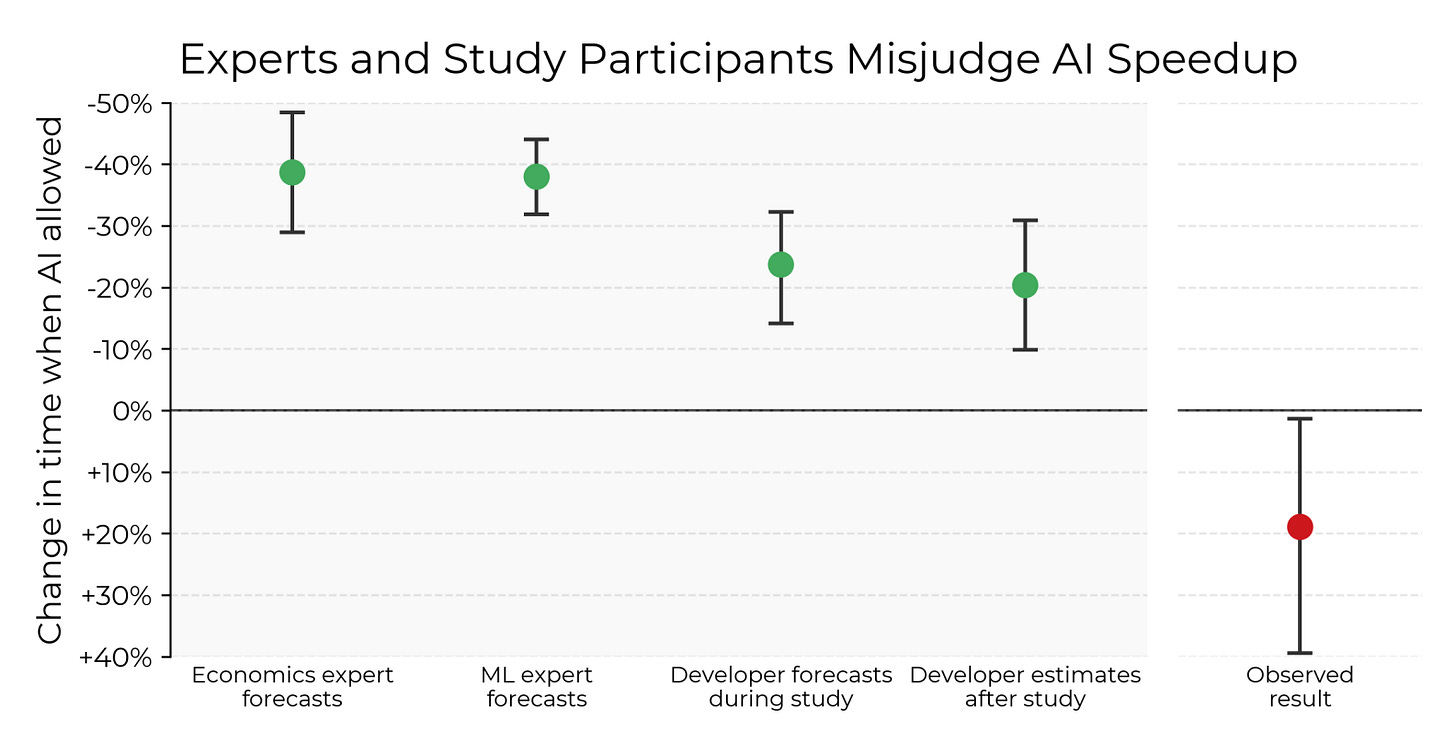

The Gallup data is entirely self-reported. Teachers were asked how often they used AI and how much time they saved on different teaching tasks. By contrast, the AI research firm METR went beyond self-reports with a similar study of software developers. They randomly assigned a set of tasks to “AI allowed” and “AI disallowed” conditions. They asked for self-reports of completion time, but they also measured actual completion time.

The engineers believed AI had cut the time their tasks took by 20% when in reality it had increased that time by 19%. So I’m happy the AI-using teachers feel like AI has shaved off six weeks of their work, but the METR study should make us question self-reported data of this sort.

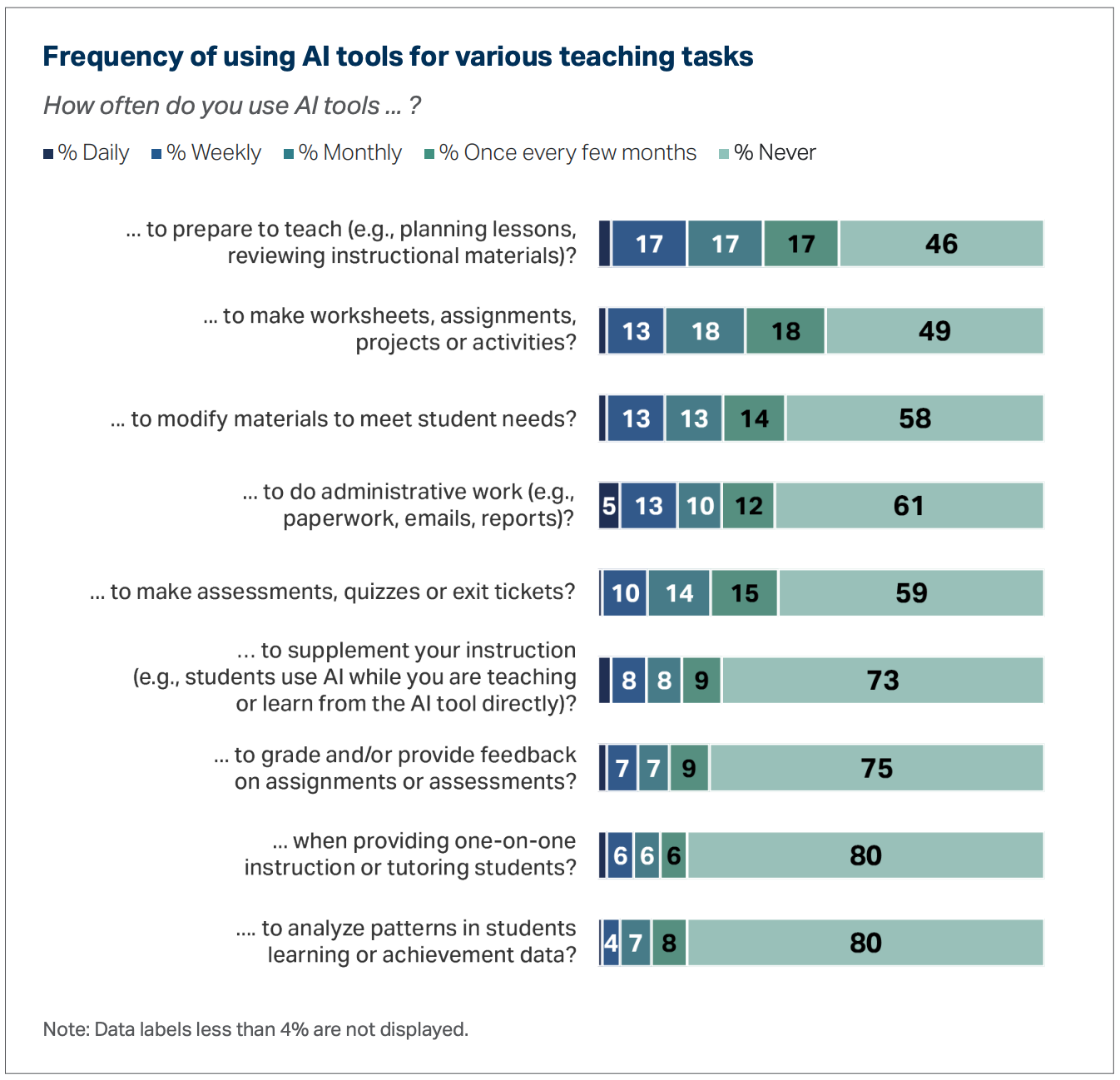

These sample sizes are quite small.

The most common response to the question, “How often do you use AI tools to do this teaching task?” was “never” for every teaching task surveyed. Only 4% of the 2,232 surveyed teachers described using AI weekly to “analyze patterns in students’ learning,” for example. That amounts to roughly 90 teachers whose responses were averaged across other categories and then “multiplied by the number of contracted weeks per year (37.4, on average)” to get the time savings expressed as weeks. “Margins of error for subgroups are higher,” says the methods section, which I believe. Unfortunately, those margins were then multiplied by 37.4.
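To make that arithmetic concrete, here is a rough Python sketch. It uses the survey figures quoted above (2,232 teachers, a 4% subgroup, 37.4 contracted weeks) and a textbook 95% margin of error for a mean. The report does not publish the spread of individual time-savings answers, so the 3-hour standard deviation below is a hypothetical placeholder, not Gallup’s number. Whatever the true spread, the structural point holds: any uncertainty in the weekly figure gets multiplied by 37.4 when it is annualized.

```python
import math

# Figures from the Gallup survey as quoted in the text.
TOTAL_TEACHERS = 2232
WEEKLY_SHARE = 0.04    # share using AI weekly to "analyze patterns in students' learning"
CONTRACT_WEEKS = 37.4  # contracted weeks per year, on average


def subgroup_size(total: int, share: float) -> float:
    """Approximate number of respondents in a subgroup (4% of 2,232 is about 89)."""
    return total * share


def annualize(hours_per_week: float, weeks: float = CONTRACT_WEEKS) -> float:
    """Scale a weekly figure, or its margin of error, up to a yearly figure."""
    return hours_per_week * weeks


def margin_of_error(sd: float, n: float, z: float = 1.96) -> float:
    """Normal-approximation 95% margin of error for a sample mean."""
    return z * sd / math.sqrt(n)


n = subgroup_size(TOTAL_TEACHERS, WEEKLY_SHARE)

# HYPOTHETICAL: the report gives no standard deviation; 3 hours is illustrative only.
sd_hours = 3.0

weekly_moe = margin_of_error(sd_hours, n)
yearly_moe = annualize(weekly_moe)  # the same error, multiplied by 37.4

print(f"subgroup size: about {n:.0f} teachers")
print(f"weekly margin of error: ±{weekly_moe:.2f} hours")
print(f"yearly margin of error: ±{yearly_moe:.1f} hours")
```

With these placeholder numbers, a weekly margin of roughly 0.6 hours becomes a yearly margin of roughly 23 hours. A larger real spread would make both numbers bigger, and the yearly one 37.4 times bigger still.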

Okay also: saving time is not per se good!

Students seem to be saving tons of time these days using AI. Yet plenty of adults are more worried than excited about those savings. They worry that students are saving time by skipping work they should be doing: not writing early drafts of their papers, not struggling to remember which solution method is most appropriate, not committing knowledge to memory.

We should worry similarly about teaching. Are we happy or sad that teachers are outsourcing their tasks to AI? Which tasks? It’s true that teachers can save time giving feedback on student writing by asking an AI to do it. If saving time were our only priority, we could save even more time by simply not assigning essays at all. Clearly, the more stuff AI can do, the more we need to wrestle with the questions, “What stuff should AI do? Are there costs besides time that we should consider?”

Featured Comments

Michelle Kerr on last week’s teaching dilemma:

The tricky part isn’t explaining [whether the answer is “6-n” or “n-6”]. It’s getting them to stop and think about it before they answer. This is also the case with whether the slope is positive or negative or whether (x+3) has a zero at 3 or -3. It’s always about getting them to stop and take a beat before they answer instead of just mentally flipping a coin. The hard part is reminding them that they have the knowledge already if they just take a second to stop and tap into it.

Ryan Muller believes the MOOC comparison doesn’t account for AI’s vastly different technological power:

The scaling that has unrelentingly continued to extend its capability on ambiguous and long running cognitive tasks hits a brick wall against... teaching?

Just so I’m not misunderstood: yep, that’s exactly what I think. Humans have—to date!—needed other humans to help them do hard things they’d otherwise rather not do. So you can file teaching alongside therapists and gym trainers as professions where the impact of AI is going to be limited compared to the rest of the economy.

Upcoming Presentation

March 13. This month I’ll be giving a keynote address at California’s annual math conference in Bakersfield, CA. We’ll be talking about why and how we can restore creativity to math, a discipline frequently perceived as a creative wasteland.

Odds & Ends

¶ Congrats to Amplify’s math curriculum team for earning an all-green rating from EdReports for Amplify Desmos Math, a curriculum that is near and dear to my heart.

¶ You simply have to hand it to Pam Burdman sometimes. Perhaps you recall the thunderclouds above UC San Diego’s math results recently. The Wall Street Journal’s editorial board called it “A Math Horror Show,” attributing increased enrollment in remedial math to “increased admissions of students from ‘under-resourced high schools.’” (UC San Diego made the same attribution.) But Pam used the Wayback Machine and found another factor contributing to that increased enrollment, one I haven’t seen reported anywhere else.

¶ Rick Hess interviewed me for Education Week. One Q&A:

RH: What’s the one big thing a school or system leader should know when it comes to AI and schooling?

DM: The introduction of generative AI has not changed the fundamentals of your work: getting absentee students back to school; making sure kids feel supported and known; communicating results and challenges with parents; creating a positive working environment for teachers; and keeping kids safe. For all of its power in the world outside of schools, generative AI has not transformed the reality of any of those challenges and may, in the case of student mental health, exacerbate them. The work is still the work.

¶ I love the lines John Warner quoted from How College Works.

The fundamental problem of higher education is no longer the availability of content, but rather the availability of motivation.

Human contact, especially face to face, seems to have an unusual influence on what students choose to do, on the directions their careers take, and on their experience of college. It has leverage, producing positive results far beyond the effort put into it.

If human contact is a lever, we can think of technology as one kind of fulcrum. We see different results for kids depending on where we put that fulcrum and what kind of force we apply to the lever.

¶ Michael Pershan is correct: “most people do not want this.” Downthread he writes:

Most kids are not sitting around wishing that they could just hunker down with a tutor. They would rather be in class with their friends.

You can try to convince people to want something they do not want. You can yell into the wind. You can swim against the river. But the most prominent boosters of personalized learning do not seem to engage meaningfully with the nature of the wind or the river, with the reality that most people are not like them, that most people do not want this.

¶ Related: Ben Kornell ran an interesting webinar on the state of AI tutors. Notably, Khan Academy’s Kirsten DiCerbo said, “I will tell you, we see more ‘IDK IDK,’ more passive kinds of interaction than we would like.” That checks out. Andrea Pasinetti, CEO of Kira, offered a useful summary of the “micro-interactions that happen between students and tutors that can pull students out of the illusion of tutoring.”

Any latency. A question of two to three seconds delay between when a student asks a question and when a student gets a high-quality answer can cause distraction, it can cause a student to move on, it can cause a student to lose their own context in terms of what they’re learning and why they’re asking a particular question. An incorrect answer. A duplicative answer. An answer that maybe is not leveled properly in terms of reading level or concept difficulty or an answer that presumes knowledge that the student doesn’t have can all have a very negative impact on engagement and ultimately learning outcomes.

¶ I gave a talk at UC Davis last week and loved seeing the Better Call Saul-style advertisements on the bulletin boards offering legal advice for (alleged, but let’s be real) AI plagiarists.

I wonder how much of the time “saved” by AI comes out of the work teachers would ordinarily do outside of school, in which case it doesn’t save six weeks of school at all; it just shortens the teacher workday so that it’s closer to what the contract says it’s supposed to be.

For me, there’s a lot of work that I get done with AI that simply wouldn’t have gotten done without it. The spiral review warmups I have my Calculus students complete each week would either not exist or would do a much worse job of systematically covering topics from throughout the year. They also wouldn’t be as well tailored to the specific academic level of my students, and I’d have a harder time designing them to be data-informed.

I agree that if I were asked how much time I save doing this, I could only give a rough estimate, because I don’t know how long making these kinds of materials would take without AI. But saying that it was a big chunk of time would be quite true, and I think most teachers using AI regularly feel the same.

Do you think my students would be better served if I scoured the internet or spent a lot of time designing specific problems for them to review? Because my deep intuition at this point is that it’s a much more natural and effective workflow to start from learning objectives and use AI as a backwards design companion from those goals. Current models are really impressive for tasks like these!